Colorado Implements America’s First Comprehensive AI Law

For the past two years, the European Union's AI Act has dominated the conversation around AI governance. And for good reason. It's the most comprehensive AI regulation in the world, covering everything from outright bans on certain AI practices to detailed obligations for high-risk systems.

But the regulatory picture in the US is shifting quickly too.

Colorado has passed the first comprehensive, risk-based AI law in the United States, and it takes effect in June 2026. California, Illinois, New York City, and Utah have all enacted their own targeted regulations. And a handful of other states are currently drafting legislation (IAPP tracker).

Unlike the EU, where there's a single framework to follow, the US is building an overlapping web of state-by-state requirements that will be harder to navigate. Most coverage so far has focused on the challenges for companies that build AI, while the impact on organizations that deploy it is often overlooked. This article breaks down both.

Introducing the Colorado AI Act

On May 17, 2024, Colorado Governor Jared Polis signed Senate Bill 24-205, formally titled "Consumer Protections for Artificial Intelligence." It made Colorado the first US state to enact a broad, risk-based AI governance law (Colorado General Assembly, SB24-205).

The law was originally set to take effect on February 1, 2026, but following a special legislative session, lawmakers pushed the date back to June 30, 2026 to give organizations more time to prepare. The core requirements remain intact.

The Future of Privacy Forum described it as the "first comprehensive and risk-based approach" to AI accountability in the United States (University of Denver analysis). It was deliberately modeled on international frameworks, drawing from both the EU AI Act and existing US privacy laws like the California Consumer Privacy Act.

What the Colorado AI Act Requires

The law focuses specifically on "high-risk AI systems," defined as any AI system that makes, or is a substantial factor in making, a "consequential decision" about a consumer. Consequential decisions cover areas including education, employment, financial services, housing, healthcare, and legal services (Colorado General Assembly, SB24-205).

There are two categories of obligations depending on your role in the AI supply chain.

If you develop AI systems (Developers), you must:

- Use "reasonable care" to protect consumers from known or foreseeable risks of algorithmic discrimination.

- Provide deployers with documentation about the system, including summaries of training data, known risks, and methods used to evaluate and mitigate discrimination.

- Publish a public statement summarizing the types of high-risk AI systems you've developed and how you manage discrimination risks.

- Disclose to the Colorado Attorney General and all known deployers any discovered risks of algorithmic discrimination within 90 days.

If you use AI systems in your operations (Deployers), you must:

- Implement a risk management policy and program for every high-risk AI system you deploy.

- Publish a statement about which high-risk AI systems you deploy and how you manage associated risks.

- Complete impact assessments evaluating the risk of algorithmic discrimination.

- Conduct annual reviews of each deployed high-risk system to ensure it is not causing discrimination.

- Notify consumers when a high-risk AI system makes or substantially influences a consequential decision about them.

- Provide consumers with the ability to correct incorrect personal data used by the system.

- Offer consumers the opportunity to appeal adverse decisions through human review, where technically feasible.

- Report any discovered instances of algorithmic discrimination to the Attorney General within 90 days.
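Both lists above include a 90-day disclosure window. As a rough illustration, the deadline can be tracked with simple date arithmetic. This is a minimal sketch that assumes the window is counted in calendar days from the date of discovery; the function names are hypothetical, and how the statute actually counts the period is a question for counsel.

```python
from datetime import date, timedelta

# Assumption: the 90-day window is counted in calendar days from discovery.
REPORTING_WINDOW_DAYS = 90


def disclosure_deadline(discovered_on: date) -> date:
    """Return the last day of the 90-day window under the stated assumption."""
    return discovered_on + timedelta(days=REPORTING_WINDOW_DAYS)


def days_remaining(discovered_on: date, today: date) -> int:
    """Days left before the window closes; a negative value means overdue."""
    return (disclosure_deadline(discovered_on) - today).days


if __name__ == "__main__":
    found = date(2026, 7, 1)
    print(disclosure_deadline(found))
    print(days_remaining(found, date(2026, 8, 1)))
```

A tracker like this is only useful if the discovery date itself is recorded at the time an issue is found, which is an operational habit rather than a technical one.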

The law also includes two important protections. Developers and deployers that meet the statute's requirements enjoy a rebuttable presumption that they used reasonable care. Separately, organizations that comply with a nationally or internationally recognized AI risk management framework (such as the NIST AI Risk Management Framework) and that discover and correct violations can raise an affirmative defense in an enforcement action (Colorado General Assembly, SB24-205).

Enforcement sits exclusively with the Colorado Attorney General. Violations are treated as deceptive trade practices under the Colorado Consumer Protection Act, with civil penalties of up to $20,000 per violation.

What This Means for Most Organizations

For many organizations, the deployer obligations are the most directly relevant. Even if you never build an AI model, every time your employees use a high-risk AI system to make or influence decisions about consumers, your organization carries compliance responsibility.

In practice, that means being able to identify which AI systems are in use across the organization, understanding what data flows into those systems, maintaining impact assessments, and being prepared to demonstrate governance to a regulator if asked.

Comparing the Colorado AI Act to the EU AI Act

The EU AI Act (Regulation (EU) 2024/1689) entered into force on August 1, 2024, with provisions rolling out on a staggered timeline through August 2027. It remains the most comprehensive AI regulation globally (European Commission, AI Act overview).

Both laws share a risk-based approach. Both focus their heaviest requirements on high-risk AI systems. Both require risk management programs, transparency, and documentation from developers and deployers alike.

But there are meaningful differences.

Scope. The EU AI Act applies across all 27 EU member states and reaches any organization worldwide that places AI systems on the EU market or whose AI systems affect people in the EU. The Colorado law applies only to entities doing business in Colorado that affect Colorado residents. That said, for organizations with a national workforce, the practical reach of Colorado's law may be broader than it first appears.

Prohibited practices. The EU AI Act bans certain AI uses outright, including social scoring, real-time biometric surveillance in public spaces (with narrow exceptions), and manipulative AI techniques (EU AI Act, Article 5). The Colorado law does not prohibit any specific AI applications. It focuses on governing how high-risk systems are managed rather than banning categories of use.

Enforcement and penalties. The EU AI Act empowers national supervisory authorities across member states and, for the most serious violations, carries penalties of up to €35 million or 7% of global annual revenue, whichever is higher (EU AI Act, Article 99). Colorado's enforcement is limited to the state Attorney General, with violations treated under consumer protection law and penalties up to $20,000 per violation. The difference in enforcement muscle is significant.
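To make the gap concrete, the two ceilings can be computed side by side. The sketch below uses only the figures cited above; the function names are hypothetical, and the results are upper bounds rather than predictions, since actual penalties and the way violations are counted are up to the enforcing authority.

```python
EU_FIXED_CAP_EUR = 35_000_000           # fixed ceiling cited above
EU_REVENUE_SHARE_PERCENT = 7            # percent of global annual revenue
COLORADO_CAP_PER_VIOLATION_USD = 20_000


def eu_penalty_ceiling_eur(global_annual_revenue_eur: int) -> int:
    """Higher of the fixed cap and 7% of global annual revenue."""
    revenue_based = global_annual_revenue_eur * EU_REVENUE_SHARE_PERCENT // 100
    return max(EU_FIXED_CAP_EUR, revenue_based)


def colorado_penalty_ceiling_usd(violation_count: int) -> int:
    """Per-violation cap multiplied by an assumed number of violations."""
    return COLORADO_CAP_PER_VIOLATION_USD * violation_count


if __name__ == "__main__":
    print(eu_penalty_ceiling_eur(1_000_000_000))   # revenue of EUR 1 billion
    print(eu_penalty_ceiling_eur(100_000_000))     # revenue of EUR 100 million
    print(colorado_penalty_ceiling_usd(10))        # ten violations
```

Under these assumptions, the EU ceiling scales with company size, while Colorado's scales with the number of violations.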

Deployer obligations. Both laws impose obligations on organizations that use high-risk AI systems. The EU AI Act requires deployers to implement human oversight measures, keep automated logs for at least six months, and inform employees and workers' representatives when AI is used in workplace decisions (EU AI Act, Article 26). Colorado places a stronger emphasis on consumer notification, correction rights, and the ability to appeal adverse decisions through human review.

General-purpose AI. The EU AI Act includes specific obligations for providers of general-purpose AI models, particularly those deemed to pose systemic risks, including adversarial testing, risk mitigation, incident reporting, and cybersecurity requirements (EU AI Act, Article 55). The Colorado law does not separately address general-purpose AI models.

Consumer rights. Colorado gives consumers the right to correct data used in AI decisions and to appeal adverse outcomes through human review. The EU AI Act introduces a right to explanation for individuals affected by high-risk AI decisions (European Commission, Navigating the AI Act). The approaches are different but both reflect a push toward giving individuals more agency in how AI affects their lives.

What Each Law Considers "High Risk"

Both laws concentrate their heaviest obligations on AI systems classified as "high risk." But they draw that line differently, and the way they draw it has implications that most compliance playbooks haven't caught up with yet.

Colorado's framework is decision-centric. It asks one question: does the AI system make, or substantially influence, a "consequential decision" about a consumer? If the answer is yes across any of eight categories (education, employment, lending, government services, healthcare, housing, insurance, or legal services), the system is high risk. The law also exempts systems that perform narrow procedural tasks or detect decision-making patterns without replacing human judgment.
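The decision-centric test described above can be expressed as a short check. This is a simplified sketch for triage, not a legal determination: the field names are hypothetical, the two exemption flags are a loose reading of the exemptions mentioned above, and real classifications turn on statutory definitions that are still being debated.

```python
from dataclasses import dataclass

# The eight categories of consequential decisions listed above.
CONSEQUENTIAL_AREAS = frozenset({
    "education", "employment", "lending", "government services",
    "healthcare", "housing", "insurance", "legal services",
})


@dataclass(frozen=True)
class AIUse:
    decision_area: str                    # area the decision falls into
    makes_decision: bool                  # system makes the decision itself
    substantial_factor: bool              # system substantially influences it
    narrow_procedural_task: bool = False  # exemption flag (simplified)
    pattern_detection_only: bool = False  # exemption flag (simplified)


def is_high_risk_colorado(use: AIUse) -> bool:
    """Rough triage: consequential area, no exemption, real influence."""
    if use.decision_area.strip().lower() not in CONSEQUENTIAL_AREAS:
        return False
    if use.narrow_procedural_task or use.pattern_detection_only:
        return False
    return use.makes_decision or use.substantial_factor
```

For example, `is_high_risk_colorado(AIUse("employment", False, True))` evaluates to `True`, while the same use flagged as a narrow procedural task does not.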

The EU AI Act takes a use-case-centric approach and reaches considerably further. Annex III lists specific applications across eight domains: biometrics, critical infrastructure, education, employment, essential services, law enforcement, migration, and democratic processes. On top of that, any AI used as a safety component in a product already regulated under EU law (medical devices, vehicles, machinery) is automatically high risk through Annex I.

The overlap between the two laws is significant. Education, employment, financial services, healthcare, and legal services all trigger high-risk classification under both frameworks. An organization deploying an AI hiring tool or credit decisioning model will be in scope on both sides of the Atlantic.

The divergence is also meaningful. The EU covers biometrics, critical infrastructure, law enforcement, migration, and elections. Colorado doesn't touch any of those. Colorado, for its part, explicitly covers government services, housing, and insurance of all kinds (not just life and health insurance), areas where the EU framework doesn't directly map.
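The overlap and divergence can be captured as a small lookup for first-pass scoping. The sketch below encodes only the groupings described in the two paragraphs above; the domain labels are this article's shorthand rather than statutory terms, and the treatment of insurance is deliberately simplified.

```python
# Domains as grouped in this article (shorthand labels, not legal terms).
BOTH = frozenset({
    "education", "employment", "financial services",
    "healthcare", "legal services",
})
EU_ONLY = frozenset({
    "biometrics", "critical infrastructure", "law enforcement",
    "migration", "elections",
})
# Simplification: insurance is listed as Colorado-only here.
COLORADO_ONLY = frozenset({"government services", "housing", "insurance"})


def regimes_for(domain: str) -> frozenset:
    """Return which of the two laws treat the given domain as high risk."""
    key = domain.strip().lower()
    regimes = set()
    if key in BOTH or key in EU_ONLY:
        regimes.add("EU AI Act")
    if key in BOTH or key in COLORADO_ONLY:
        regimes.add("Colorado AI Act")
    return frozenset(regimes)
```

An empty result means only that the domain is not in this article's lists, not that a use is unregulated.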

Why Traditional Tool Classification Falls Short

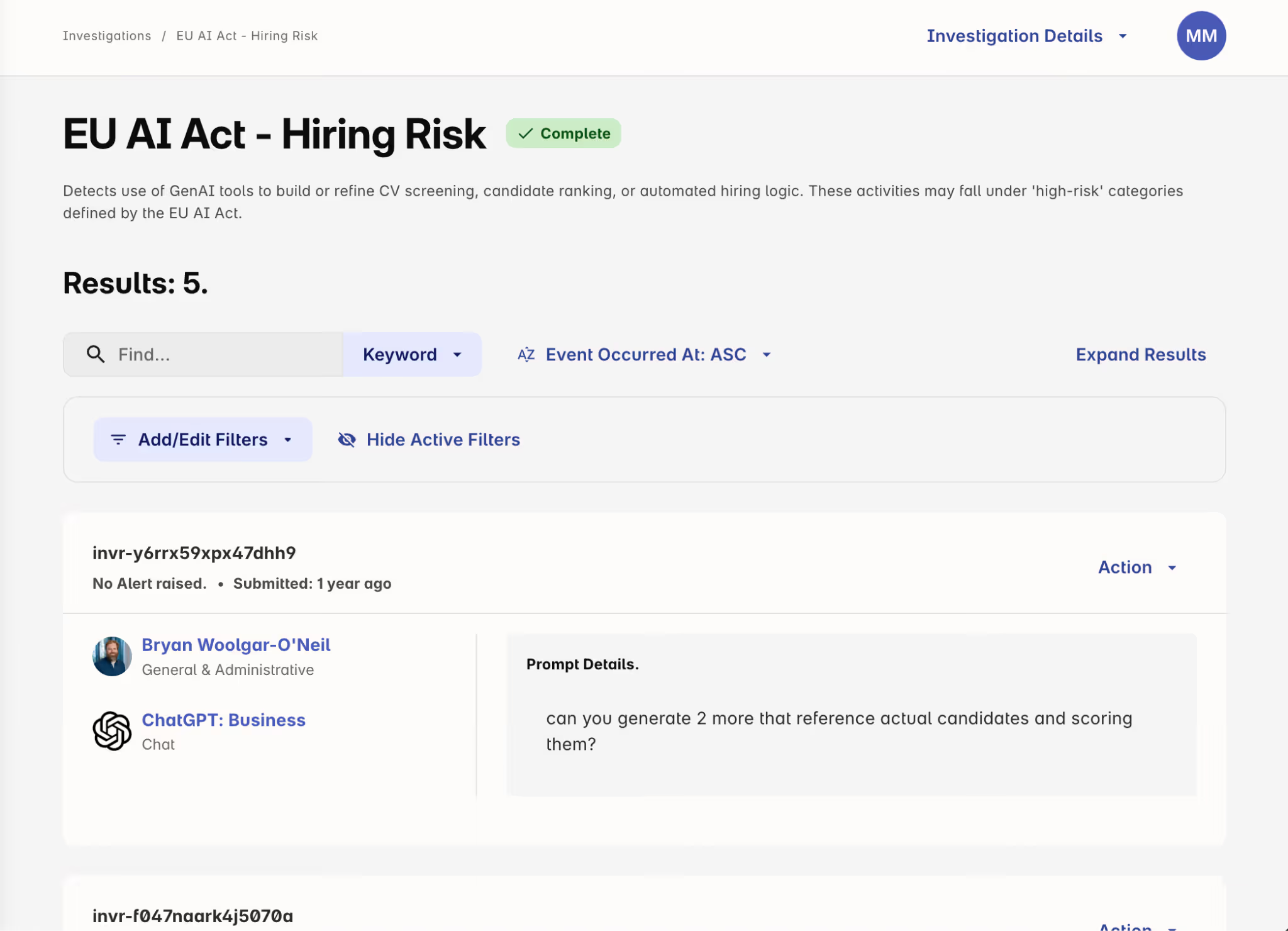

Both laws, and Colorado's in particular, classify risk based on how an AI system is actually used, not what it was designed or procured to do. This will present a novel compliance challenge for most organizations.

A general-purpose AI assistant doesn't show up in any vendor questionnaire as a "high-risk employment decision tool." But the moment an HR manager pastes a stack of resumes into it and asks for a ranked shortlist, that's a consequential decision about employment under Colorado law. The moment a claims adjuster uses it to draft coverage recommendations, that's an insurance decision.

The standard approach to AI governance (inventorying vendors, checking intended use cases against a regulatory list, and filing the results) will miss this entirely. The tools that create the most regulatory exposure will likely be general-purpose AI tools that employees use every day, applied in ways that no one in procurement or legal anticipated when the tool was onboarded.

Compliance under these frameworks depends on understanding how employees are actually using AI tools, what data flows into them, and whether those interactions cross into consequential decision territory.
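One way to picture usage-based classification is to flag tools by what employees actually do with them rather than by vendor category. The sketch below is purely illustrative: the `UsageEvent` record, the purpose tags, and the mapping to decision areas are all hypothetical, and it assumes usage has already been tagged by purpose through some review process.

```python
from collections import defaultdict
from typing import Dict, Iterable, NamedTuple, Set


class UsageEvent(NamedTuple):
    tool: str        # name of the AI tool (hypothetical)
    department: str  # where the use happened
    purpose: str     # purpose tag assigned during review (hypothetical)


# Hypothetical mapping from purpose tags to consequential decision areas.
PURPOSE_TO_AREA = {
    "rank_resumes": "employment",
    "draft_coverage_recommendation": "insurance",
}


def consequential_uses_by_tool(events: Iterable[UsageEvent]) -> Dict[str, Set[str]]:
    """Group consequential decision areas by tool, based on observed use."""
    flagged = defaultdict(set)
    for event in events:
        area = PURPOSE_TO_AREA.get(event.purpose)
        if area is not None:
            flagged[event.tool].add(area)
    return dict(flagged)


if __name__ == "__main__":
    events = [
        UsageEvent("general-assistant", "HR", "rank_resumes"),
        UsageEvent("general-assistant", "Claims", "draft_coverage_recommendation"),
        UsageEvent("general-assistant", "Marketing", "summarize_meeting_notes"),
    ]
    print(consequential_uses_by_tool(events))
```

In this example, a single general-purpose assistant ends up flagged for both employment and insurance decisions, even though nothing about the tool itself indicates either.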

Other US States Are Moving Too

Colorado gets the most attention because of its breadth, but it's far from the only state that has passed AI-specific legislation. Several other jurisdictions have enacted targeted laws worth understanding.

New York City's Automated Employment Decision Tools Law

New York City passed Local Law 144 in 2021, with enforcement beginning on July 5, 2023. It requires any employer or employment agency in NYC that uses automated employment decision tools (AEDTs) to have those tools independently audited for bias on an annual basis and to publish the results publicly (NYC Department of Consumer and Worker Protection).

Employers must also notify candidates at least 10 business days before using an AEDT, explain what qualifications the tool evaluates, disclose the data sources and types of AI being used, and offer candidates the ability to request an alternative evaluation process.
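The 10-business-day notice window lends itself to a quick calculation. The sketch below counts back weekdays from the planned date of AEDT use; it assumes business days are Monday through Friday and ignores public holidays, and the function name is hypothetical.

```python
from datetime import date, timedelta

NOTICE_BUSINESS_DAYS = 10  # notice period cited above


def latest_notice_date(aedt_use_date: date,
                       business_days: int = NOTICE_BUSINESS_DAYS) -> date:
    """Step back the given number of weekdays from the planned use date.

    Assumption: Monday to Friday are business days; holidays are ignored.
    """
    current = aedt_use_date
    remaining = business_days
    while remaining > 0:
        current -= timedelta(days=1)
        if current.weekday() < 5:  # 0-4 are Monday through Friday
            remaining -= 1
    return current


if __name__ == "__main__":
    # Planned AEDT use on Monday, March 16, 2026.
    print(latest_notice_date(date(2026, 3, 16)))
```

Because holidays are not accounted for, a real schedule would want extra margin on top of the date this returns.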

Violations carry civil penalties between $500 and $1,500 per violation, per day.

Illinois and AI in Hiring

Illinois was one of the earliest movers on AI-specific employment law. The Artificial Intelligence Video Interview Act (820 ILCS 42), effective since January 2020, requires employers that use AI to analyze video interviews to notify applicants beforehand, explain how the AI works and what characteristics it evaluates, and obtain consent before any AI-based evaluation takes place (Illinois General Assembly, 820 ILCS 42).

In August 2024, Illinois enacted HB 3773, amending the Illinois Human Rights Act to explicitly prohibit the use of AI that results in discrimination based on protected classes. Effective January 1, 2026, this law also bans using zip codes as a proxy for protected classes in AI-driven employment decisions (Seyfarth Shaw analysis). Practically speaking, the anti-discrimination provisions largely reinforce existing obligations under the Illinois Human Rights Act rather than creating wholly new ones, but the explicit application to AI systems signals where legislative attention is headed.

California's Multi-Bill Approach

California has taken a fragmented, multi-bill approach rather than passing a single comprehensive act. Key enacted laws include:

- AI Transparency Act (SB 942): Effective January 1, 2026, requires providers of generative AI systems with over 1 million monthly users to disclose when consumers are interacting with AI and to offer free AI detection tools.

- AB 2013: Requires developers of generative AI to publish summaries of training datasets, including sources, licensing information, and whether personal or synthetic data was used.

- Civil Rights Department AI regulations: Restrict discriminatory use of AI in employment decisions, effective since October 2025.

Governor Newsom notably vetoed SB 1047, a broader AI safety bill, in 2024, signaling reluctance toward sweeping regulation. California's approach remains targeted and use-case specific for now, though the California Privacy Protection Agency continues to develop regulations around automated decision-making technology that could broaden the state's regulatory footprint.

Utah's AI Disclosure Requirements

Utah’s 2024 AI Policy Act requires entities that offer consumer-facing generative AI services in regulated professions to disclose when consumers are interacting with AI rather than a human. Subsequent amendments (SB 226 and SB 332) narrowed the disclosure requirement to situations where a consumer directly asks or during high-risk interactions involving health, financial, or biometric data.

The Federal Picture

There is currently no comprehensive federal AI law. Congress has introduced hundreds of bills mentioning artificial intelligence, but fewer than 30 have been enacted, and most of those were limited to defense or appropriations contexts (Congressional Research Service, R48555).

In January 2025, President Trump signed Executive Order 14179, revoking the Biden administration's 2023 AI executive order and reorienting federal policy toward promoting innovation and reducing regulatory barriers. In December 2025, a second executive order signaled intent to establish a national AI policy framework and potentially preempt state laws deemed overly burdensome (Gunderson Dettmer analysis).

What this means in practice is uncertain. Executive orders direct federal agencies but do not create enforceable laws for private companies. Until Congress passes legislation, the state-by-state patchwork remains the binding regulatory reality for most organizations.

Looking Ahead

A few patterns are worth watching.

More states will follow. Georgia and Illinois both introduced bills modeled on Colorado's law, though neither advanced to enactment. As Colorado's law takes effect and other states observe the results, similar legislation is likely to surface across additional jurisdictions.

The definition of "high-risk" will matter enormously. Colorado's law hinges on whether an AI system influences a "consequential decision." As AI features become embedded in more everyday business software, the line between what qualifies as high-risk and what doesn't will be tested repeatedly. A cross-sector task force appointed to evaluate the Colorado AI Act has already flagged definitional challenges around terms like "consequential decisions," "substantial factor," and "algorithmic discrimination."

Compliance will increasingly require operational governance, not just policy documents. Every regulation discussed here includes some combination of documentation requirements, impact assessments, consumer notifications, and audit trails. Meeting those obligations at scale requires systematic approaches to understanding how AI is being used across an organization, not just written policies that sit in a shared drive.

The tension between enabling AI adoption and governing it responsibly isn't going away. These regulations aren't designed to stop organizations from using AI. Colorado's affirmative defense framework, for example, explicitly rewards organizations that adopt recognized risk management frameworks and actively monitor for problems. The legislative intent across all of these laws is to make AI use more transparent and accountable, not to prevent it.

For organizations trying to stay ahead of the curve, the most pragmatic approach is likely to start with the frameworks that already exist. The NIST AI Risk Management Framework, ISO/IEC 42001, and the governance structures outlined in the EU AI Act all provide useful scaffolding. Building on those standards won't guarantee compliance with every state law, but it will put you in a stronger position than starting from scratch each time a new regulation drops.